Key Takeaways

- Coding agents now write code, call tools, and take autonomous actions at scale, creating governance gaps most organizations haven't addressed.

- 80% of developers admit to bypassing security policies when using AI coding tools. The adoption curve is steep. The governance gap is steeper.

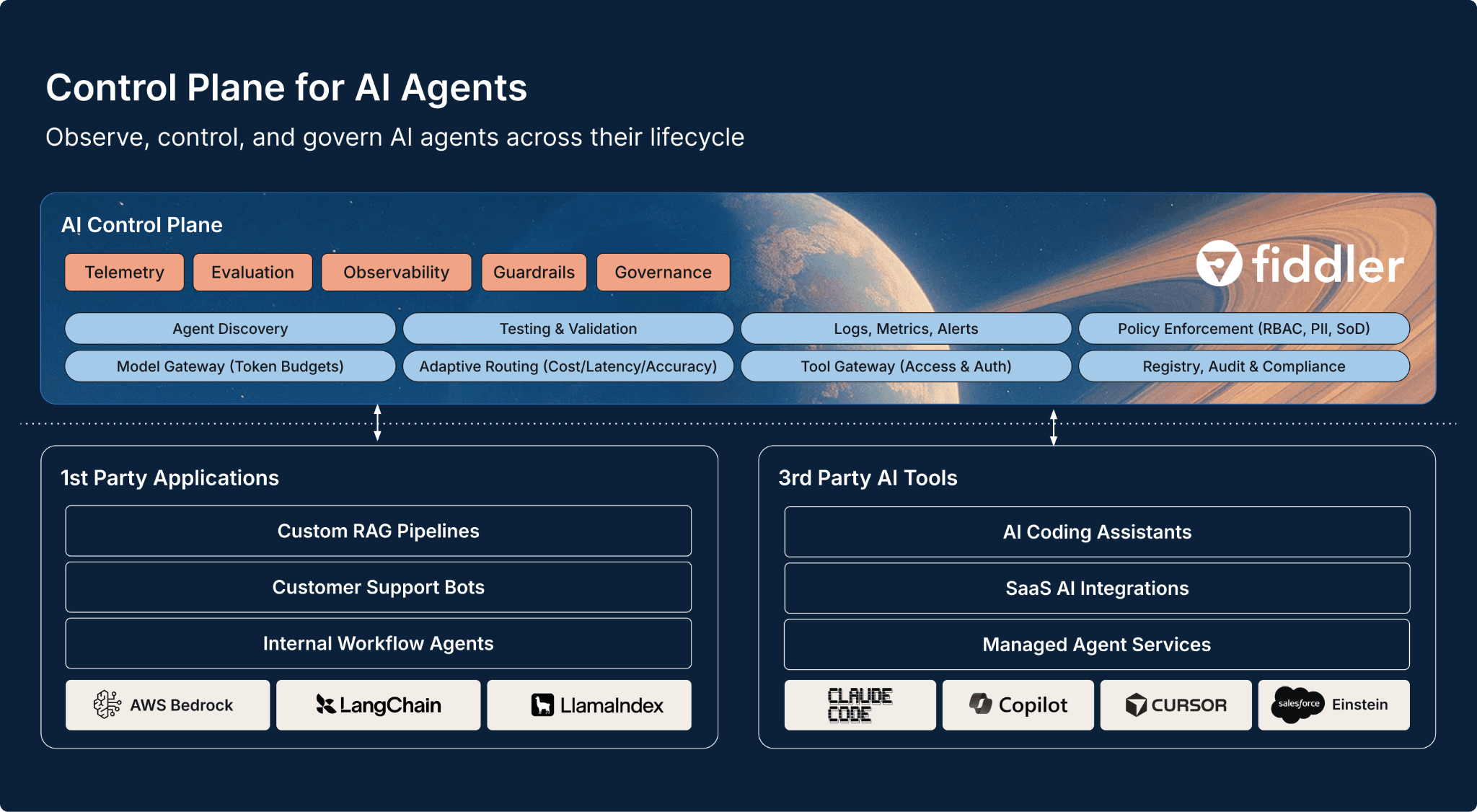

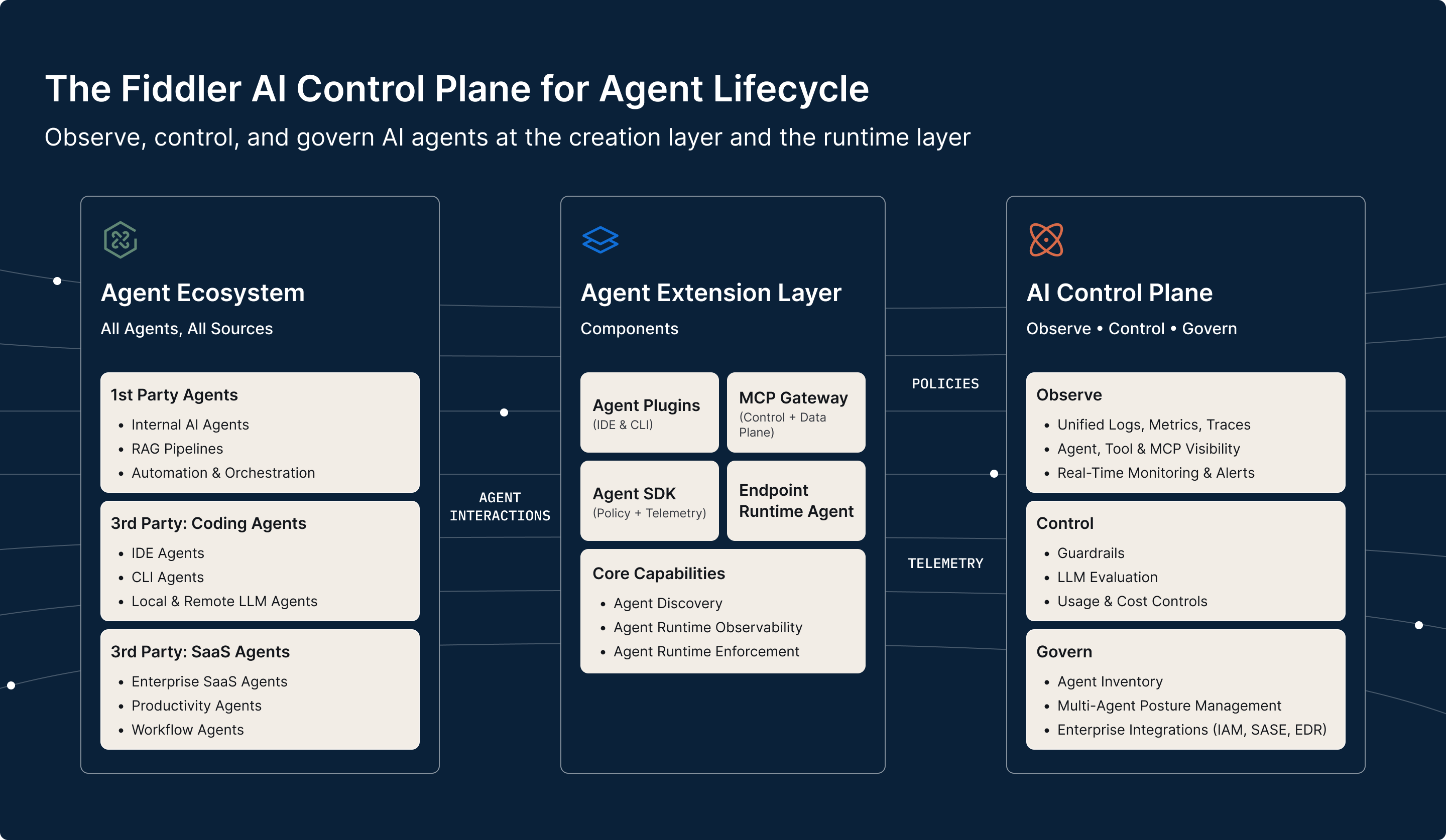

- Fiddler acquired Lumeus to extend its AI Control Plane to coding agents operating at the IDE, CLI, and MCP boundary.

- The combined platform spans the full agent lifecycle, from code generation through production.

- Design partners get early access to the combined platform.

We are excited to share that Fiddler has acquired Lumeus, the solution for agent posture management, policy enforcement, and security at the code generation layer.

This extends the Fiddler AI Control Plane into the fastest-moving and highest-risk area of agentic AI: the coding agent workflow. With Lumeus, our AI Control Plane will observe, control, and govern agent behavior from the moment code is generated all the way to production.

No other company in the market can deliver visibility, context, and control at both the creation layer and the runtime layer. Together, we now span the lifecycle of coding agents from code generation to production behavior.

The Governance Shift From Models to Agents

AI systems are no longer models behind APIs. They're agents that write code, call tools, and take actions autonomously. The agent harness, including tool use, context management, and memory, now matters as much as the model itself. [1]

At Fiddler, we've been building toward this moment since our Series C, when we committed to the AI Control Plane. That thesis is playing out faster than we expected, and nowhere faster than in coding agents.

- 84% of developers are using or planning to use AI tools in their development process, with over half using them daily. [2]

- 80% admit to bypassing security policies when using AI coding tools. [3]

- Most organizations lack end-to-end governance for agent-based systems. [4]

According to Anthropic, developers now integrate AI into 60% of their work, yet most organizations have no lifecycle governance in place for agentic systems. [5]

The adoption curve is steep. The governance gap is steeper.

How We Got Here

It started with a conversation two years ago. Fiddler had been steadily building the runtime AI Control Plane. Lumeus was securing the workflows where coding agents actually operate: the IDE, the CLI, and the MCP boundary.

Every few months, we compared notes. Between us, we'd spent 1,000+ hours with CISOs and CIOs, and the same questions kept coming up. How many coding agents are active across our organization? Are they running on endpoints or in the cloud? What policies govern them?

Fiddler gave enterprises visibility into agents in production. Lumeus' customers could enforce policy where coding agents operate but couldn't extend it beyond the development environment. Similar blind spots. Different vantage points.

The control plane has to span both environments, development and production, or it isn’t a control plane at all.

It Is a Control Problem

Traditional model risk was about prediction quality. Agentic risk shows up in execution, not output.

A coding agent can write unsafe code. It can expose secrets in generated output. It can call the wrong tool, or the right tool at the wrong time. It can follow malicious instructions embedded in its context window through prompt injection. It can access files, APIs, and enterprise systems with whatever permissions it inherits, often broader than any human developer would be granted. And it can do all of this in seconds, at scale, across every repository in an organization.
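One of the risks above, secrets appearing in generated output, can be illustrated with a minimal sketch. The function name `find_secrets` and the two patterns are assumptions chosen for illustration; they are not part of any Fiddler or Lumeus product, and a real scanner would rely on a much larger rule set.

```python
import re

# Illustrative patterns only (an assumption for this sketch);
# production scanners use far more extensive rules.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_block": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}


def find_secrets(generated_text: str) -> list[str]:
    """Return the names of the patterns that match an agent's generated output."""
    return [
        name
        for name, pattern in SECRET_PATTERNS.items()
        if pattern.search(generated_text)
    ]


# Usage: check a generated snippet before it is written to disk or committed.
# The key below is the placeholder value used in AWS documentation.
snippet = 'client = connect(key="AKIAIOSFODNN7EXAMPLE")'
flagged = find_secrets(snippet)
```

A check like this only covers one item in the list. Tool misuse, prompt injection, and inherited permissions need controls on what the agent is allowed to do, not only on what it writes.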

Simon Willison, who coined the term "prompt injection," describes the core risk as any system combining access to private data, exposure to untrusted content, and the ability to communicate externally. [6] Coding agents routinely hit all three. Every workflow is simultaneously an attack surface, a compliance boundary, and a governance gap.
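The three-way combination Willison describes can be expressed as a simple check over an agent's capabilities. This is a hedged sketch: `AgentCapabilities` and `has_lethal_trifecta` are hypothetical names introduced here, and how an organization actually determines each capability is outside its scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical summary of what one agent workflow is able to do."""
    reads_private_data: bool          # e.g. source code, credentials, internal docs
    sees_untrusted_content: bool      # e.g. web pages, issue text, third-party files
    can_communicate_externally: bool  # e.g. HTTP requests, email, pushing to remotes


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three risk conditions hold at the same time."""
    return (
        caps.reads_private_data
        and caps.sees_untrusted_content
        and caps.can_communicate_externally
    )


# Usage: a coding agent with repository access, web browsing, and network egress.
coding_agent = AgentCapabilities(True, True, True)
sandboxed_agent = AgentCapabilities(True, True, False)
```

Removing any one of the three capabilities, as in `sandboxed_agent`, breaks the combination, which is why the check uses a strict conjunction.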

The question is no longer whether a model's response looks correct. It's whether an autonomous system is doing the right things, with the right boundaries. That's a control problem, and it requires a control plane that starts before production.

Why the Control Plane Must Span the Full Lifecycle

Every platform transition produces a control layer: cloud, containers, networking, security. AI is following the same pattern, but these systems are probabilistic and adaptive. An agent's behavior depends on what it can access, how it interprets its context, and what controls exist around its actions.

Willison put it bluntly: “We’re due a Challenger disaster with respect to coding agent security… so many people, myself included, are running these coding agents practically as root.” [7]

If you want to govern agentic systems, you cannot start in production. You have to shape behavior at the point where agents write code, access tools, and establish the patterns that later become runtime behavior. CISOs, CIOs, and platform teams need one shared view of how every agent in their organization is behaving, whether it's a first-party application, a third-party agent, or a coding assistant.

The AI Control Plane: The System of Trust Begins at Code Generation

In networking and cloud infrastructure, the control plane is the layer that sits above the data plane and decides what's allowed. It routes traffic, enforces policy, manages identity, and provides a single source of truth for how a system behaves. It doesn't replace the components below it. It governs them.

The AI agent ecosystem has no shortage of point solutions: observability tools, eval frameworks, guardrail libraries, security scanners, governance platforms. Each is necessary. None is sufficient. As Martin Fowler notes, the fundamental security weakness of LLM-based agents isn't any single vulnerability. It's the combination of capabilities that creates systemic risk. [8] Agents operate across creation, execution, and production. A tool that sees one slice cannot govern the whole.

The control plane connects these capabilities into a single system of trust. It enforces policy at code generation time and verifies compliance with that same policy in production. It observes an agent's runtime behavior and feeds those signals back to shape how future code is written.
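As a rough illustration of one policy being applied at both ends of the lifecycle, the sketch below evaluates the same tool allow-list at code generation time and again in production. `ToolPolicy`, `check_calls`, the stage labels, and the tool names are all assumptions made for this example; they do not describe the actual Fiddler or Lumeus implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolPolicy:
    """Hypothetical policy: the set of tools an agent is allowed to call."""
    allowed_tools: frozenset[str]

    def permits(self, tool_name: str) -> bool:
        return tool_name in self.allowed_tools


def check_calls(policy: ToolPolicy, stage: str, tool_calls: list[str]) -> list[dict]:
    """Return one violation record per disallowed tool call, tagged with its stage."""
    return [
        {"stage": stage, "tool": tool}
        for tool in tool_calls
        if not policy.permits(tool)
    ]


# Usage: one policy object, evaluated in both environments.
policy = ToolPolicy(allowed_tools=frozenset({"read_file", "run_tests"}))

dev_violations = check_calls(policy, "code_generation", ["read_file", "delete_branch"])
prod_violations = check_calls(policy, "production", ["run_tests"])

# Violations from either stage land in one record format, so they can be
# reviewed together and used to tighten the policy over time.
all_violations = dev_violations + prod_violations
```

The design point is that both stages share one policy definition and one violation format, so a finding in production can be traced back to the rule that should have applied at generation time.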

What We’re Building Next

With Lumeus, the Fiddler Control Plane for AI will span the full agent lifecycle. It covers first-party applications, third-party agents, and coding assistants. And it treats agent behavior not as something to inspect after the fact, but as something to shape continuously.

The Stack Overflow engineering blog recently made the point plainly: coding guidelines for AI agents need to be more explicit, more pattern-driven, and more continuously enforced than anything built for human developers. [9] That's not a documentation problem. It's a governance problem, and it's exactly what the control plane is designed to solve.

The control point in AI is shifting from models to agents, from runtime to the code generation boundary. If you can control how agents write code and what they're allowed to do, you're not monitoring AI. You're governing it.

Join Our Design Partner Program

We're selecting design partners for early access to the Control Plane for Coding Agents. If you're a CISO, CIO, or platform leader deploying coding agents at scale, we want to build with you. Early partners get hands-on access before GA, direct input into the product roadmap, and a direct line to our team. Request design partnership.

References

- [1] S. Raschka, “Components of a Coding Agent,” Ahead of AI, April 2026. https://magazine.sebastianraschka.com/p/components-of-a-coding-agent

- [2] Stack Overflow Developer Survey, 2025. https://survey.stackoverflow.co/2025/

- [3] Snyk, The State of AI Code Security, 2025.

- [4] Gartner AI TRiSM, 2025.

- [5] Anthropic, “2026 Agentic Coding Trends Report,” January 2026. https://resources.anthropic.com/2026-agentic-coding-trends-report

- [6] S. Willison, “The Lethal Trifecta for AI Agents,” simonwillison.net, June 2025. https://simonwillison.net/2025/Jun/16/the-lethal-trifecta/

- [7] S. Willison, “LLM Predictions for 2026” (Oxide and Friends), January 2026. https://simonwillison.net/2026/Jan/8/llm-predictions-for-2026/

- [8] M. Fowler, “Agentic AI and Security,” martinfowler.com, 2026. https://martinfowler.com/articles/agentic-ai-security.html

- [9] Stack Overflow Blog, “Building Shared Coding Guidelines for AI,” March 2026. https://stackoverflow.blog/2026/03/26/coding-guidelines-for-ai-agents-and-people-too/